Article 1: The Context Gap — From Integration to Discovery

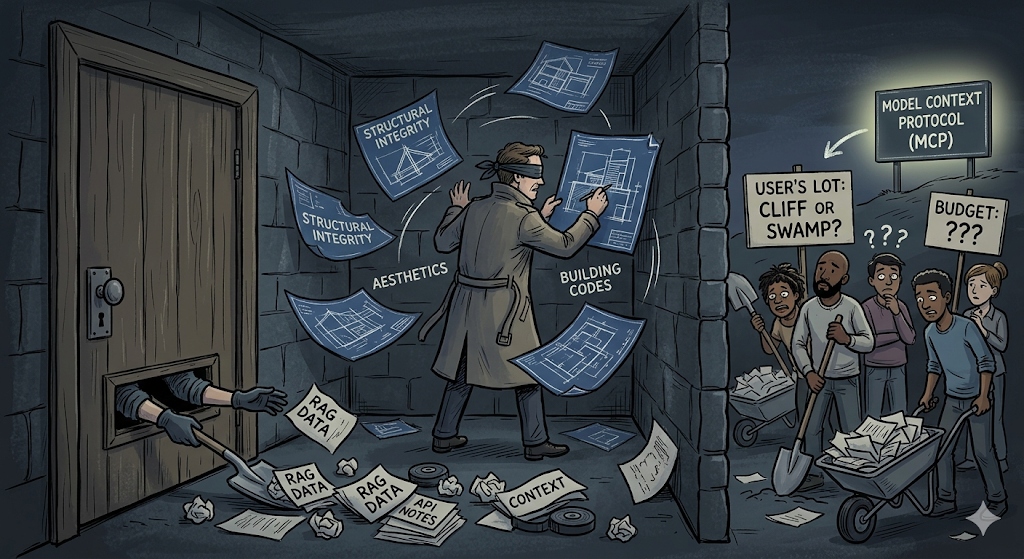

The Brilliant, Blind Architect

Imagine hiring the world’s greatest architect to design your house, then locking them in a windowless room. They know everything about structural integrity, aesthetics, and building codes, but they have no idea if your lot is on a cliffside or a swamp. They don’t know your budget, and they certainly don’t know that you hate open-concept kitchens.

To get anything done, you have to shovel notes under the door.

This is exactly how we treat LLMs today. We have “Intelligence” in a box, but it’s suffering from a Context Gap. It knows how to solve problems, but it can’t see the data it needs to solve your problems.

The Shovel Problem: RAG and Hardcoding

Up until now, we’ve tried to bridge this gap with two primary methods, both of which are starting to show their age:

- The Context Dump (RAG): We pre-index everything into vector databases and shove massive amounts of data into the prompt. It’s expensive and noisy, and it can push the model toward hallucination when the one relevant passage is buried in the “shovelful” of data we just threw at it.

- Hardcoded Tools: We manually write “glue code” for every single API endpoint we want the model to use. If you have ten data sources and three different AI agents, you’re stuck maintaining thirty bespoke integrations. It doesn’t scale.

From Integration to Discovery

The fundamental shift offered by the Model Context Protocol (MCP) is moving from Integration to Discovery.

In the “Integration” era, you are the active participant. You write the code that connects Point A to Point B. You handle the parsing, the auth, and the mapping.

In the “Discovery” era, your data becomes the active participant. By using a standardized protocol, your systems simply expose their capabilities and schemas. The AI application, acting as the “Host,” connects to your “Server” through an MCP client and discovers what it can do on the model’s behalf. You aren’t building a bridge; you’re publishing a map.
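To make “publishing a map” concrete, here is a minimal sketch of discovery using only Python’s standard library. MCP messages are JSON-RPC 2.0, and `tools/list` is the protocol method a client uses to ask a server what it offers. Everything else is an assumption for illustration: the `get_order_status` tool, its schema, and the in-process `send` callable standing in for a real transport. The sketch also skips the initialization handshake a real session performs first.

```python
import json

# Hypothetical tool catalog; the name and schema are illustrative only.
TOOLS = [
    {
        "name": "get_order_status",
        "description": "Look up the status of an order by its ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
]


def handle_request(raw: str) -> str:
    """Server side: answer a JSON-RPC 2.0 request (only tools/list here)."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {"tools": TOOLS},
        }
    else:
        # Standard JSON-RPC error code for an unsupported method.
        response = {
            "jsonrpc": "2.0",
            "id": request.get("id"),
            "error": {"code": -32601, "message": "Method not found"},
        }
    return json.dumps(response)


def discover_tool_names(send) -> list[str]:
    """Client side: ask the server what it offers and return the tool names."""
    request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
    reply = json.loads(send(json.dumps(request)))
    return [tool["name"] for tool in reply["result"]["tools"]]


if __name__ == "__main__":
    # The function call stands in for a real transport between client and server.
    print(discover_tool_names(handle_request))
```

The client never hardcodes what the server can do. It learns the tool names and input schemas at runtime, which is the difference between writing an integration and reading a map.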

The LSP for AI

The best way to understand MCP is by looking at the Language Server Protocol (LSP).

Before LSP, every IDE (VS Code, Sublime, Vim) needed a custom plugin for every programming language (Go, JS, Python). It was an N x M nightmare. LSP fixed this by creating a standard: a single Language Server can now talk to any IDE that speaks the protocol.
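The arithmetic behind that nightmare is worth spelling out. The sketch below reuses the ten data sources and three agents from earlier; the function names are made up for illustration, and it assumes the simplest case where each source needs one server and each agent needs one client.

```python
def bespoke_integrations(sources: int, agents: int) -> int:
    """Without a shared protocol, every agent needs glue code for every source."""
    return sources * agents


def protocol_adapters(sources: int, agents: int) -> int:
    """With a shared protocol, each source ships one server and each agent one client."""
    return sources + agents


if __name__ == "__main__":
    print(bespoke_integrations(10, 3))  # 30
    print(protocol_adapters(10, 3))     # 13
```

Adding an eleventh data source costs three more integrations in the first model and one more server in the second.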

MCP is the LSP for data context. It decouples the “Intelligence” from the “Interface.” It doesn’t matter if your backend is written in Go or if your model is Claude or a local Llama instance. As long as both sides speak MCP, they can connect without custom glue code.

Just-in-Time Context

The result of this shift is Just-in-Time Context.

Instead of moving all your data into a vector store and hoping for the best, the data stays where it lives, in your production databases and APIs. When a user asks a question, the model picks the right “Tool” or “Resource” from the lists the server advertises (via requests such as tools/list and resources/list) and fetches exactly what it needs, the moment it needs it.
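A rough sketch of one just-in-time fetch, again with only the standard library. `tools/call` is the MCP method for invoking a tool, and the reply carries its output as a list of content items. The `ORDERS` dictionary, the `get_order_status` tool, and the in-process `send` callable are assumptions standing in for a production database and a real transport.

```python
import json

# Stand-in for a production database; in practice the data stays in your own systems.
ORDERS = {"A-100": "shipped", "A-101": "processing"}


def get_order_status(order_id: str) -> str:
    """Hypothetical tool implementation: read one record, nothing more."""
    return ORDERS.get(order_id, "unknown")


def handle_tool_call(raw: str) -> str:
    """Server side: run the requested tool and wrap its output as text content."""
    request = json.loads(raw)
    params = request.get("params", {})
    if request.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    if params.get("name") != "get_order_status":
        error = {"code": -32602, "message": "Unknown tool"}
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    status = get_order_status(params["arguments"]["order_id"])
    result = {"content": [{"type": "text", "text": status}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


def fetch_order_status(send, order_id: str) -> str:
    """Client side: request exactly one record at the moment it is needed."""
    request = {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "get_order_status", "arguments": {"order_id": order_id}},
    }
    reply = json.loads(send(json.dumps(request)))
    return reply["result"]["content"][0]["text"]


if __name__ == "__main__":
    print(fetch_order_status(handle_tool_call, "A-100"))
```

Only the single status string crosses the wire and enters the prompt. Nothing was pre-indexed, and the rest of the order table never leaves the server.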

It’s cleaner, it keeps prompts smaller, and it scales with the number of servers you publish rather than the number of integrations you hand-build.

Next Steps

This sets the stage. We’ve defined the “Why” and identified the “Context Gap.” In the next post, we’re going to get into the weeds of how this data actually moves across the wire, and why the choice between a local script and a persistent stream changes everything.