The SSE Advantage: Choosing the Right Transport for MCP

Article 2: The SSE Advantage — Choosing the Right Transport

In the first part of this series, we looked at how the Model Context Protocol (MCP) bridges the “Context Gap” by moving from hardcoded integrations to dynamic discovery. But once you decide to expose your data to an LLM, you’re faced with a fundamental engineering choice: How do you actually move the bytes across the wire?

MCP is transport-agnostic, meaning it doesn’t care if you’re talking over a local pipe or a satellite link. However, the transport you choose defines the limits of what your AI can actually do.

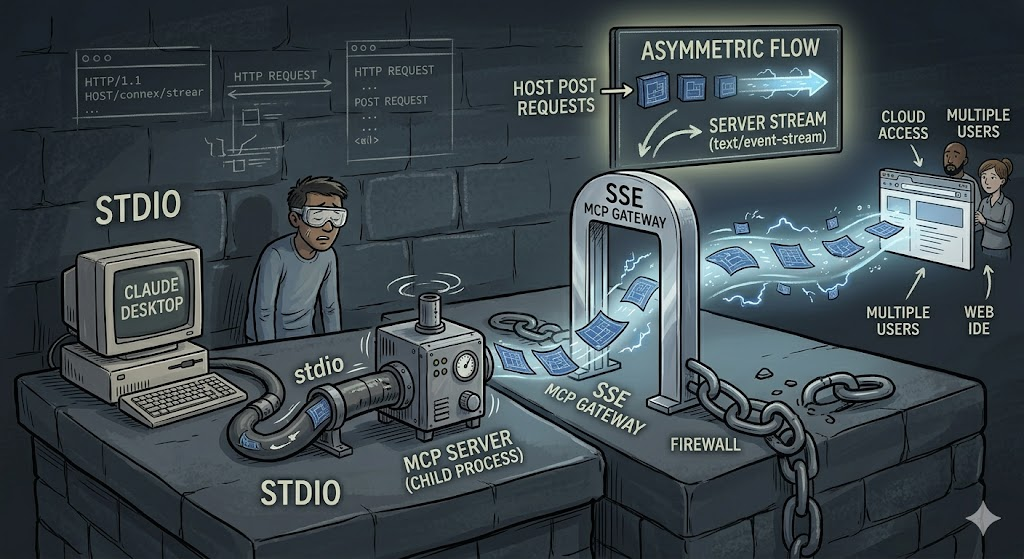

The Transport Crossroads: Stdio vs. SSE

There are two primary ways to run an MCP server today:

- Stdio: This is the “Hello World” of MCP. The host (like Claude Desktop) starts your binary as a child process and communicates via standard input and output. It’s incredibly fast and requires zero network configuration. It’s perfect for local-only tools, but it’s tethered to a single machine and a single user.

- SSE (Server-Sent Events): This turns your MCP server into a living, network-addressable service. If you want to build a tool that lives in the cloud, serves multiple users, or integrates with a web-based IDE, SSE is the architecture you need.
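To make the stdio side concrete, here is a minimal Go sketch of the loop a local server runs: read one JSON-RPC message per line, write one reply per line. The `serve` and `handleLine` names and the echo-style result are assumptions made for illustration, not part of any MCP SDK. A real server would call `serve(os.Stdin, os.Stdout)` and dispatch on the method.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"io"
	"strings"
)

// request is a minimal JSON-RPC 2.0 request envelope.
type request struct {
	JSONRPC string          `json:"jsonrpc"`
	ID      json.RawMessage `json:"id"`
	Method  string          `json:"method"`
}

// response is a minimal JSON-RPC 2.0 success envelope.
type response struct {
	JSONRPC string            `json:"jsonrpc"`
	ID      json.RawMessage   `json:"id"`
	Result  map[string]string `json:"result"`
}

// serve reads newline-delimited JSON-RPC requests from r and writes one
// reply per line to w. With stdio, r is os.Stdin and w is os.Stdout.
func serve(r io.Reader, w io.Writer) error {
	sc := bufio.NewScanner(r)
	enc := json.NewEncoder(w) // Encode appends a newline after each value
	for sc.Scan() {
		var req request
		if err := json.Unmarshal(sc.Bytes(), &req); err != nil {
			continue // a real server would answer with a JSON-RPC parse error
		}
		// Hypothetical result: echo the method name back to the host.
		reply := response{JSONRPC: "2.0", ID: req.ID, Result: map[string]string{"echo": req.Method}}
		if err := enc.Encode(reply); err != nil {
			return err
		}
	}
	return sc.Err()
}

// handleLine runs serve over a single in-memory message, for demonstration.
func handleLine(line string) string {
	var out strings.Builder
	_ = serve(strings.NewReader(line+"\n"), &out)
	return out.String()
}

func main() {
	fmt.Print(handleLine(`{"jsonrpc":"2.0","id":1,"method":"ping"}`))
}
```

Because the loop only depends on an `io.Reader` and an `io.Writer`, the same message handling can sit behind a network transport later.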

The Theory of Asymmetric Flow

The brilliance of the SSE implementation in MCP lies in its Asymmetric Flow.

Most developers reaching for “real-time” web tech instinctively think of WebSockets. But WebSockets are full-duplex and state-heavy, which is often overkill for AI workflows. SSE offers a cleaner, more resilient alternative by splitting the conversation into two distinct channels:

- The Inbound (Host → Server): These are standard HTTP POST requests. When the LLM decides to call a tool or fetch a resource, the host sends a discrete JSON-RPC request.

- The Outbound (Server → Host): This is a long-lived, unidirectional stream. The server “pushes” JSON-RPC responses, notifications, and heartbeats back to the host over a persistent connection.
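The two channels carry differently shaped bytes, which is easier to see in code. The Go sketch below builds the JSON body a host might POST and the SSE frame a server might push back. The helper names `inboundBody` and `outboundFrame` are hypothetical; the `event:`/`data:` framing with a terminating blank line follows the standard SSE wire format.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// rpcRequest is a JSON-RPC 2.0 request, the payload of each inbound POST.
type rpcRequest struct {
	JSONRPC string         `json:"jsonrpc"`
	ID      int            `json:"id"`
	Method  string         `json:"method"`
	Params  map[string]any `json:"params,omitempty"`
}

// inboundBody builds the body the host would POST to the server.
func inboundBody(id int, method string, params map[string]any) (string, error) {
	b, err := json.Marshal(rpcRequest{JSONRPC: "2.0", ID: id, Method: method, Params: params})
	return string(b), err
}

// outboundFrame wraps a payload as one SSE event for the server-to-host
// stream. Each payload line gets its own "data:" field, and a blank line
// marks the end of the event.
func outboundFrame(event, payload string) string {
	var sb strings.Builder
	if event != "" {
		sb.WriteString("event: " + event + "\n")
	}
	for _, line := range strings.Split(payload, "\n") {
		sb.WriteString("data: " + line + "\n")
	}
	sb.WriteString("\n")
	return sb.String()
}

func main() {
	body, err := inboundBody(1, "tools/list", nil)
	if err != nil {
		panic(err)
	}
	fmt.Println("POST body:", body)
	fmt.Print(outboundFrame("message", `{"jsonrpc":"2.0","id":1,"result":{"tools":[]}}`))
}
```

The request and its answer travel on different connections, so the JSON-RPC `id` is what lets the host match a frame on the stream to the POST that caused it.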

Why SSE Beats WebSockets for AI

Why opt for SSE over the more common WebSocket approach? In the context of LLMs, SSE is the “Goldilocks” transport for three specific reasons:

- The “Think-Time” Resilience: LLMs don’t stream data in a constant bi-directional flurry. They receive a request, “think” for several seconds (or longer), and then act. SSE handles these idle periods gracefully: the stream stays open without any application traffic, and the server can send an occasional lightweight heartbeat so that proxies with idle timeouts don’t drop the connection.

- Firewall Friendliness: SSE is just “enhanced” HTTP. It doesn’t require the protocol “upgrade” handshake that WebSockets do, meaning it usually passes through load balancers, proxies, and enterprise firewalls that can choke on WebSocket connections.

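- Native Reconnection: The protocol has built-in support for reconnections. Events can carry an ID, and a standard client such as the browser’s EventSource reconnects on its own after a network blip and reports the last ID it saw, so the server can pick the stream back up without the developer writing complex “retry” logic in JS. Clients without a built-in EventSource, as in Go, need a library or a small amount of bookkeeping to get the same behavior.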
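Reconnection works because the stream itself carries the resume information. As a rough sketch, assuming a Go client with no EventSource implementation to lean on, the code below tracks the three things a client needs from the wire: the last committed `id:`, the server-suggested `retry:` delay, and comment lines (starting with `:`) that serve as heartbeats. The `resumeState`, `readStream`, and `parseString` names are made up for this example.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"strconv"
	"strings"
	"time"
)

// resumeState is what a client must remember to resume a dropped stream.
type resumeState struct {
	LastEventID string        // sent back as the Last-Event-ID request header
	Retry       time.Duration // server-suggested wait before reconnecting
	Events      int           // complete events seen so far
}

// readStream consumes SSE lines until the reader ends (for example, when
// the network drops) and records the resume information in st.
func readStream(r io.Reader, st *resumeState) error {
	sc := bufio.NewScanner(r)
	pendingID := st.LastEventID
	hasData := false
	for sc.Scan() {
		line := sc.Text()
		switch {
		case line == "":
			// A blank line completes an event; only now is the ID committed.
			st.LastEventID = pendingID
			if hasData {
				st.Events++
			}
			hasData = false
		case strings.HasPrefix(line, ":"):
			// Comment line, commonly used as a heartbeat. Nothing to record.
		default:
			field, value, _ := strings.Cut(line, ":")
			value = strings.TrimPrefix(value, " ")
			switch field {
			case "id":
				pendingID = value
			case "retry":
				if ms, err := strconv.Atoi(value); err == nil && ms >= 0 {
					st.Retry = time.Duration(ms) * time.Millisecond
				}
			case "data":
				hasData = true
			}
		}
	}
	return sc.Err()
}

// parseString runs readStream over an in-memory stream, for demonstration.
func parseString(s string) resumeState {
	var st resumeState
	_ = readStream(strings.NewReader(s), &st)
	return st
}

func main() {
	// The final event is cut off before its blank line, as if the link dropped.
	st := parseString("retry: 3000\n\n: keep-alive\n\nid: 7\ndata: hello\n\nid: 8\ndata: partial")
	fmt.Printf("reconnect after %v with Last-Event-ID %q (%d events seen)\n", st.Retry, st.LastEventID, st.Events)
}
```

The half-received event with ID 8 is never committed, so on reconnect the client would ask to resume after ID 7. Whether a given MCP server honors that header is up to the server.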

The Handshake: Establishing the Link

To understand the data flow, you have to understand the handshake. It’s a three-step dance that ensures the Host knows exactly how to talk to the Server:

- The Connection: The Host makes a GET request to the server’s `/sse` endpoint.

- The Stream: The Server responds with `Content-Type: text/event-stream` and keeps the connection open.

- The Instruction: The Server immediately pushes an `endpoint` event. This event contains a unique URL that tells the Host, “Whenever you want to send me a command, POST it here.”
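The three steps can be sketched end to end in Go with only the standard library. This is a toy under stated assumptions, not a production server: the `/messages?sessionId=demo-123` URL is a made-up placeholder (a real server would mint a random session ID per connection), and `sseHandler`, `handshake`, and `runDemo` are hypothetical names.

```go
package main

import (
	"bufio"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// sseHandler plays the Server: open the stream, announce the POST URL.
func sseHandler(w http.ResponseWriter, r *http.Request) {
	flusher, ok := w.(http.Flusher)
	if !ok {
		http.Error(w, "streaming unsupported", http.StatusInternalServerError)
		return
	}
	// Step 2: declare an event stream and keep the connection open.
	w.Header().Set("Content-Type", "text/event-stream")
	w.Header().Set("Cache-Control", "no-cache")
	// Step 3: push the endpoint event (placeholder session ID).
	fmt.Fprint(w, "event: endpoint\ndata: /messages?sessionId=demo-123\n\n")
	flusher.Flush()
	<-r.Context().Done() // hold the stream until the host disconnects
}

// handshake plays the Host: GET /sse, then read until the endpoint event.
func handshake(baseURL string) (contentType, endpoint string, err error) {
	// Step 1: the Host opens the stream with a plain GET.
	resp, err := http.Get(baseURL + "/sse")
	if err != nil {
		return "", "", err
	}
	defer resp.Body.Close()
	contentType = resp.Header.Get("Content-Type")

	sc := bufio.NewScanner(resp.Body)
	sawEndpoint := false
	for sc.Scan() {
		line := sc.Text()
		switch {
		case line == "event: endpoint":
			sawEndpoint = true
		case sawEndpoint && strings.HasPrefix(line, "data: "):
			return contentType, strings.TrimPrefix(line, "data: "), nil
		}
	}
	return contentType, "", fmt.Errorf("stream closed before endpoint event")
}

// runDemo wires the handler into a throwaway local test server.
func runDemo() (string, string, error) {
	mux := http.NewServeMux()
	mux.HandleFunc("/sse", sseHandler)
	srv := httptest.NewServer(mux)
	defer srv.Close()
	return handshake(srv.URL)
}

func main() {
	ct, endpoint, err := runDemo()
	if err != nil {
		panic(err)
	}
	fmt.Println("Content-Type:", ct)
	fmt.Println("POST commands to:", endpoint)
}
```

From here, the Host would send each JSON-RPC request as a POST to the announced URL while continuing to read the stream for replies.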

By separating the “listening” channel (the stream) from the “command” channel (the POSTs), MCP over SSE creates a robust, distributed system that can scale far beyond a single local machine.

What’s Next?

We’ve established that SSE is the superior architecture for “Context-as-a-Service.” But here’s the catch: building this from scratch—handling the event-buffering, managing session IDs, and ensuring data integrity—is a massive engineering lift.

In the next post, we’ll dive into the Engineering Tax of building your own MCP server in Go and JS, and why most developers get stuck in the “glue code” phase.